Why Behavioral Interview Prep Frameworks Succeed or Fail for Software Engineers at FAANG Companies

Key patterns from interviewer insights

Summary

The behavioral interview is the round where technically strong software engineers fail most often, not due to lack of experience, but because they have never practiced converting real work into a clear, structured spoken narrative. FAANG companies use structured rubrics, calibrated by seniority level, that evaluate specific behavioral signals, not general storytelling ability. Frameworks like STAR succeed when candidates decode the company’s actual rubric and build versatile, level-appropriate stories; they fail when candidates treat them as scripts for memorized answers rather than tools for presenting evidence.

If you’d like a copy of the article in PDF format, you can download it using the link below.

How Each FAANG Company Actually Evaluates Behavioral Answers

Understanding that each company has a distinct rubric is the single most important insight for preparation. A good story for Amazon may not satisfy Google’s expectations, and vice versa.

Meta — The “Jedi” Round

Meta’s dedicated behavioral round, internally called the “Jedi” interview, typically runs 45 minutes and probes eight focus areas: motivation, proactivity, ability to work in unstructured environments, perseverance, conflict resolution, empathy, growth and communication. The interviewer is simultaneously using these signals to calibrate the candidate’s seniority level: IC4 (junior), IC5 (senior), or IC6 (staff).

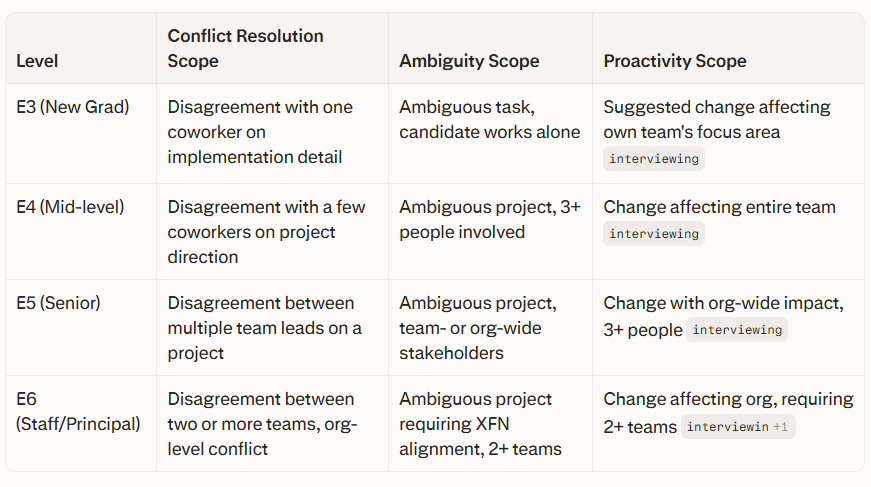

The seniority calibration hinges on the scope of the story, not the storytelling quality alone. Meta explicitly maps impact scope to levels:

It is often on the behavioral interview alone that a candidate is down-leveled during deliberations. Candidates who tell the right story but at the wrong scope lose the level, and sometimes lose the offer entirely.

Meta’s three key Jedi signals are self-awareness (do you own your failures, or blame the team?), ambiguity navigation (can you execute without defined requirements?), and pacing (do you optimize for iterative shipping, or get paralyzed by perfectionism?).

Amazon — The Leadership Principles Engine

Amazon is the most behaviorally rigorous FAANG company. Behavioral questions appear throughout the entire interview loop, from recruiter screen to final on-site, and every question is designed to map onto one or more of Amazon’s 16 Leadership Principles (LPs). The 16 LPs include Customer Obsession, Ownership, Invent and Simplify, Are Right A Lot, Bias for Action, Hire and Develop the Best, Insist on the Highest Standards, Think Big, Earn Trust, Dive Deep, Have Backbone, Disagree and Commit, Deliver Results, and others.

A critical but often missed element is the “Bar Raiser,” an independent interviewer who can veto a hire regardless of other interviewers’ recommendations. The Bar Raiser calibrates whether the candidate truly raises Amazon’s bar, not just whether they performed adequately.

The most common way candidates fail Amazon behavioral rounds is by not handling follow-up questions: Candidate tells some story about how they saved the day from some issue in prod. Interviewer says “awesome, and what did you do to prevent it from happening again?” If the candidate doesn’t have a good answer to the follow-up, they just failed that interview no matter how well they solve the coding problems. Amazon expects a postmortem mindset: systematic prevention, not just incident response.

Google — Googleyness and Leadership

Google evaluates candidates on four attributes: general cognitive ability, leadership, role-related knowledge, and “Googleyness.” Googleyness is Google’s term for the cultural traits that predict success in its collaborative, fast-moving environment. Specifically, Google interviewers assess comfort with ambiguity, bias toward action, collaborative mindset, and intellectual humility.

Candidates can expect 2-4 behavioral questions per interview round; rounds focused specifically on Googleyness and leadership may have 5-6 questions, each with follow-ups. Google looks for emergent leadership: evidence of influence through persuasion, data, and collaboration rather than through hierarchy or formal authority.

The Googleyness dimension that catches candidates off guard is intellectual humility, i.e., telling a story where you changed your mind based on evidence from someone more junior, or where you genuinely learned from failure without minimizing it.

Apple — 67 Competencies, Contextual Fit

Apple draws from a library of 67 behavioral competencies and will assess 6–16 of them during a hiring loop. Behavioral interviewing accounts for approximately one-third of the Apple interview process. The specific competencies assessed depend on the role, making generic preparation less effective than role-specific mapping.

Apple emphasizes product thinking, creative problem-solving, and deep collaboration more than the other FAANG companies. Strong answers showcase ownership (initiative taken even in ambiguous situations), measurable impact (metrics, user feedback, or stakeholder outcomes), creativity (challenging assumptions), and cross-functional execution.

Netflix — The Culture Memo as Rubric

Netflix’s culture interview is not primarily a storytelling exercise. It is a high-signal behavioral screen focused on decision-making quality, ownership, candor, and impact in a “freedom and responsibility” environment. Interviewers are not checking for personality match; they are validating whether a candidate’s working style fits an environment with high autonomy, fast tradeoffs, direct feedback, and very strong expectations for results.

A useful mental model for Netflix: (1) Context clarity: can you frame ambiguity, constraints, and stakeholders clearly? (2) Judgment: how did you choose a path, what risks did you take, and why? (3) Accountability: what did you own end-to-end and what did you learn? (4) High impact with integrity: measurable outcomes, customer focus, and trust-building behaviors like transparency and candor. Netflix expects candidates to have read and internalized the Netflix Culture Memo before the interview.

Why Behavioral Prep Frameworks Fail: 10 Recurring Patterns

Pattern 1: Type Mismatch — Returning a System Diagram Instead of a Story

When asked about a conflict, you explain the architecture. The interviewer wanted Story<PersonalDecision> and you returned TechnicalOverview<SystemDesign>. Engineers instinctively default to technical explanations. Interviewers are explicitly looking for personal decision-making, interpersonal dynamics, and judgment, not architecture walkthroughs. This type mismatch is the most frequent failure mode for engineers new to behavioral prep.

Pattern 2: Never Running the Code — No Verbal Practice

There is a massive difference between reading code and executing it. Same with behavioral answers. In your head, the story compiles cleanly. Out loud, you hit null pointers everywhere. Most candidates who fail behavioral rounds have mentally rehearsed their stories but never spoken them aloud under simulated pressure. Verbal delivery reveals gaps: unclear starting points, excessive context or results that get “garbage-collected” before the interviewer hears them.

Pattern 3: The “We” Problem — Collective Pronouns Hide Individual Contribution

Answers filled with “we identified the issue,” “we implemented the solution,” and “we shipped it on time” provide no signal about what the candidate actually did. Interviewers cannot assess whether the candidate led the charge or attended meetings. The fix is deliberate use of “I” statements with specific attribution: “While my teammate handled the frontend, I refactored the API endpoints and proposed the caching strategy.”

Pattern 4: Only Handling the Happy Path

Follow-ups are the edge cases of behavioral interviews. They are where the real evaluation happens. If you only test the happy path, you will fail in production. Candidates who prepare for tellMeAboutLeadership() but not for whyDidntYouEscalateSooner() or whatWouldYouChangeNow() collapse under probing. This is especially critical at Amazon, where Bar Raiser follow-ups are designed specifically to pressure-test stories.

Pattern 5: Level Mismatch — Scoping Stories Too Small

Senior and staff candidates frequently tell stories appropriate for a mid-level engineer. A senior engineer describing a conflict between two coworkers on an implementation detail — a perfectly valid E4 answer — signals E3 scope at the E5 bar. The scope of stakeholders, teams involved, and business impact all signal level. An E5 answer requires multi-team involvement and org-level impact; an E6 answer requires cross-org strategic influence and multi-team coordination.

Pattern 6: Memorizing Questions Instead of Building Versatile Stories

A mental spreadsheet mapping specific stories to specific questions fails because behavioral questions are incredibly varied. When the actual question differs slightly from the memorized version, candidates stall, give mismatched answers or sound scripted. Preparation should focus on building 8–12 versatile “story fixtures” — one per major theme — that can flex across dozens of questions.

Pattern 7: STAR Imbalance — Over-investing in Situation and Task

STAR fails in practice when candidates over-allocate time to setup. STAR often causes candidates to ramble on the least important part of the answer: the background. The Action and Result sections are where the evidence actually lives. Interviewers lose interest during extended Situation framing and miss the payoff entirely.

Pattern 8: No Measurable Results

“The project was successful and everyone was happy” provides zero evaluative signal. FAANG interviewers are trained to probe for quantifiable impact: “What was the quantifiable impact? How did this affect the business, customers, or team?” Even approximate metrics are significantly better than vague qualitative claims. Examples of strong result framing: “downtime dropped by approximately 80%,” “user engagement increased 14%,” “we regained 8% market share within the first quarter.”

Pattern 9: Treating Behavioral as Subjective and Not Worth Preparing

This is the most destructive myth because it stops candidates from preparing at all. FAANG companies have invested heavily in making behavioral evaluations structured and consistent: training interviewers, using rubrics and running debrief committees. Many candidates spend dozens of hours on LeetCode and zero hours on behavioral stories; this alone makes them statistically more likely to fail.

Pattern 10: Ignoring Company-Specific Value Mapping

A story that demonstrates Amazon’s “Earn Trust” LP perfectly may not map to Google’s Googleyness dimension or Meta’s “growing continuously” competency. Generic STAR answers that do not explicitly connect to company values leave the interviewer doing guesswork. Strong candidates research the company’s specific behavioral framework and map each story to it before the interview.

Why Behavioral Prep Frameworks Succeed: What Interviewers Consistently Reward

Build Versatile Story Fixtures, Not a Question Map

Eight to ten carefully selected stories from your career — covering leadership, conflict, failure, ambiguity, tight deadlines, cross-team work and technical tradeoffs — can cover approximately 40 behavioral questions. The key is selecting stories that are rich enough to answer multiple question types and adaptable enough to emphasize different dimensions based on what the interviewer is probing.

Decode the Rubric Before You Prep

Every behavioral question is hunting for specific evidence. Understanding what the interviewer wants changes everything. Effective preparation starts with reading the company’s public documentation — Amazon’s LPs, Netflix’s Culture Memo, Google’s re:Work resources on Googleyness — and then working backwards to identify which stories in your library satisfy each rubric dimension.

Quantify Everything

Strong STAR answers include measurable outcomes as a default, not as an afterthought. Quantification signals credibility and scope: “We launched two weeks ahead of schedule, which led to a 14% increase in user engagement and helped us regain 8% market share within the first quarter.” Even in qualitative stories about conflict or growth, anchoring the impact in business terms (revenue, reliability, team velocity) elevates the answer.

Add STAR-L: Make Learning Explicit

For failure, growth, and mistake questions, which all major FAANG companies ask, adding an explicit “Learnings” layer after the Result is critical. Interviewers are evaluating three things in failure questions: whether you take full responsibility, whether you extract concrete lessons, and whether you built systematic safeguards to prevent recurrence. Candidates who end their story at “the outcome was X” leave out the most important evaluative evidence.

Practice Out Loud Against Follow-Ups

Mock interviews with a peer, a coach, or a recording are the highest-value behavioral prep activity. The goal is not to achieve a perfect rehearsed answer, but to identify where answers break down under pressure: where you over-explain, where results disappear, and where follow-up questions expose thin coverage. Specifically practicing the edge cases — “What would you do differently?” “Why didn’t you escalate sooner?” “How did you prevent it from happening again?” — separates candidates who merely pass from those who give strong hire signals.

Calibrate Story Scope to the Target Level

Before an interview, map each story to its approximate scope level. If you’re targeting a senior role, every story should involve multi-person coordination, cross-team stakeholder management or org-level business impact. If you’re targeting staff or principal, stories should demonstrate org-wide or XFN influence with measurable strategic outcomes and independent scope creation. Scope calibration is the single most reliable way to avoid being down-leveled.

Universal Cross-FAANG Success Patterns

Across all five companies, experienced interviewers consistently reward the following behavioral signals regardless of company-specific rubric:

Influence without authority — building consensus through data and shared goals, not hierarchy

Disagree and commit — respectfully challenging decisions, then committing fully once a direction is set

Ownership under ambiguity — proactively defining scope and driving progress without being told what to do

Quantified business impact — connecting engineering actions to revenue, reliability or customer outcomes

Postmortem mindset — systematic prevention after failure, not just incident recovery

Intellectual humility — acknowledging limitations, seeking feedback and demonstrating growth from both success and failure

Conclusion

Behavioral interview prep frameworks succeed when they are used as evidence-gathering tools, helping candidates retrieve and present specific, calibrated proof of past behaviors aligned to a company’s rubric. They fail when candidates use them as scripts for generic storytelling. The most durable preparation strategy is to build a library of versatile, quantified, level-appropriate stories; decode each company’s specific evaluation framework; and practice until verbal delivery matches the internal clarity of the story. The behavioral round is not a personality contest. It’s a structured evidence review, and it rewards candidates who prepare for it with the same rigor they apply to technical rounds.